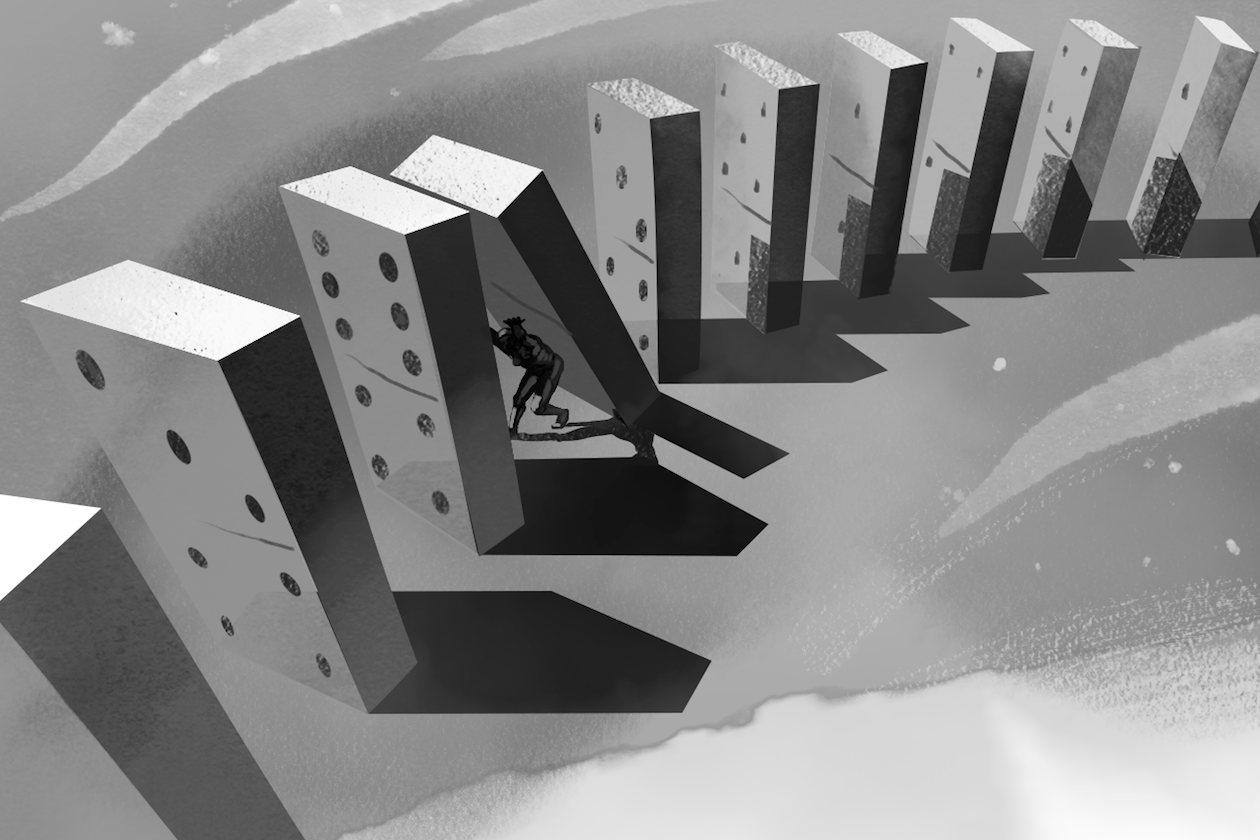

Simplification of cause-and-effect is a ubiquitous part of human nature. After an event occurs, it is easiest to point to the simplest notion as the lone cause. Ockham’s razor, or the notion that the simplest answer tends to be the most correct, likely hasn’t advanced our thinking as much as it has described our thinking. We tend to oversimplify by ignoring available information.

As humans, we tend to avoid weighing all of the available evidence in our decisions. One reason for this is obvious: it’s a lot harder. When myriad evidence bears on a given solution or action, it is easier to go with our “gut” or our emotions. In his book The Black Swan: The Impact of the Highly Improbable, author, statistician and former trader Nassim Nicholas Taleb calls this tendency the “narrative fallacy.” He explains that we like to describe complex events and problems in simple terms, creating a convenient narrative about the cause. He describes it as “our vulnerability to over-interpret and our predilection for compact stories over raw truths. It severely distorts our mental representation of the world.”

This is especially true for complex systems, such as national economies. For example, investors often create a description that accounts for one or two reasons why a company is under- or over-performing. Maybe the new leadership has made significant strides in a short time. Maybe there was a specific tax or regulation that may or may not have contributed, but we attribute the entire outcome to that single event. While sometimes accurate, the single-cause narrative comes at the expense of other possibilities.

Economies swing wildly in various directions based on stockholders’ behaviors resulting from such narratives. We may wonder after the fact: why did we not see this coming, when it was so obvious? The fallacy comes when the narrative then informs future decisions based on incomplete information that likely has nothing to do with predicting the next “similar” event. Because the human mind has inherent biases, our narratives may subconsciously support existing beliefs and discount other information as it is obtained, leading to a false certainty about future outcomes.

We certainly fall into this mode of thinking as physicians as well. The human body is so immensely complex, with such incredible variation from person to person, that we try to get to an explanation as quickly as possible. Whether our assessment is accurate or not, we tend to then take it as truth and as predictive for the next time we see such a condition clinically. But our perception of how good we are at predicting the course of illness is often inaccurate, especially considering that health and function are frequently upended by large, catastrophic events that routinely defy prediction. We are getting better at this, slowly but surely, but our confidence in foresight outpaces our actual knowledge. The big problem comes with how we then perceive similar events going forward, since the narrative often gives us the perception that we have an answer when we may not.

Don’t misunderstand me: I am not suggesting that we avoid seeking answers. For relatively simple issues in medicine, we tend to do a very good job of predicting the course and treatment of the illness. The point of being aware of the issue is to check our thinking and recognize that we often don’t know as much as we think we do. We should avoid accepting one single explanation at the expense of considering others. We often work around this by building our differential diagnoses. I don’t know about you, but sometimes I get a bit lazy in my thinking about other potential causes of symptoms or illness. Including this mental exercise benefits both the patient and the physician, bettering our care by improving our thinking.

The narratives we create in our heads need to be based on facts in order to achieve the best outcomes. Sometimes they are based on experience, sometimes on more empirical evidence, but ensuring that we consider multiple factors and possibilities in our thinking can lead to much better outcomes for our patients.

Dr. Kyle Bradford Jones is a board-certified family physician at the University of Utah School of Medicine. He practices at the Neurobehavior HOME Program, a patient-centered medical home for individuals with developmental disabilities. He is very interested in how technology and social media can be used to improve overall health and clinical care.

Dr. Jones is a 2018–2019 Doximity Author.